Introduction

Data is the key asset that developers use to train machine learning (ML) systems. However, preparing ML data for large-scale projects can be a time-consuming and resource-intensive task. Traditional methods often involve manual cleaning, labeling, and feature engineering, which can become a bottleneck in the development process.

Programmatic methods, such as using Python libraries like Faker, can be used for simple synthetic data generation tasks, but it fails when being used to mimic real-world contexts. Faker primarily focuses on generating generic data types like names, addresses, and companies, and works for simple data generation tasks. However, the moment a programmer tries to mimic real-world scenarios, such as generating hundreds of customer reviews in various languages, Faker is ineffective.

In this article, we will showcase how LLMs can be effectively used to generate ML data at scale. We will use open-source LLMs that can be run on cloud infrastructure. The advantage of this approach is that it is far more cost-effective in the long run. Think about it - if you had to use an LLM API and pay per token, you would end up with a massive bill when the dataset you want to generate is large. On the other hand, with open-source LLMs hosted on a cost-effective infrastructure like E2E Cloud, your cost remains fixed even if you run the data generation pipeline 24x7 for the entire month. This leads to huge cost savings in the long run, and offers you far more control over your pipeline.

Why do LLMs work so well as data generation systems? The reason is simple – LLMs have been trained on internet-scale data, and therefore, have the capability of capturing domain-specific knowledge and relationships between entities. This allows them to generate data that is not only realistic but also adheres to the specific context and terminology of your field.

Technology Architecture

For this showcase, we will use Ollama, a simple yet powerful framework for getting any LLM up and running quickly. We will also use LangChain, which integrates with Ollama nicely, and therefore allows us to execute LLM queries and generate responses. As far as the LLM is concerned, we will use Mistral 7B. However, we will also show how you can shift the LLM around to another one later, and perform the same task, thus giving you flexibility in choosing the right LLM for the task.

Mistral 7B is a powerful open-source LLM designed for efficiency and performance, and beats Llama2 in several tasks and, therefore, is our LLM of choice in this experiment. Also, beyond strong performance in general language tasks, Mistral 7B exhibits particular strength in code generation, and is effective for us therefore. An alternative we could have used is Code Llama.

Problem Statement

We will take the problem statement of generating realistic customer reviews around products. This data can be effectively used to train LLMs later, which can be used to build chatbots for customer service representatives, or review analytics models.

Let’s say that our data is about customer reviews around all smartphones on a marketplace. To start with, we will generate the following fields of data, which can later be used for training ML models.

Customer name: Name of the customer.

Customer review: Review left by the customer. Can be in English or Hinglish. Can be between one sentence to two paragraphs.

Screen quality: Rated on a scale of 1 to 5.

Value for money: Rated on a scale of 1 to 5.

Battery life: Rated on a scale of 1 to 5.

Gaming: Rated on scale of 1 to 5.

Let’s say we want to generate this data in CSV format.

Step 1: Launch GPU Node

To start with, launch a GPU node on E2E Cloud. Head over to Myaccount first, sign in and register, and then head to Compute.

There’s a big reason to choose GPU nodes on E2E Cloud instead of other platforms — cost. You can compare this for yourself with other hyperscalers; GPU nodes on E2E Cloud cost a fraction of what they cost on other platforms.

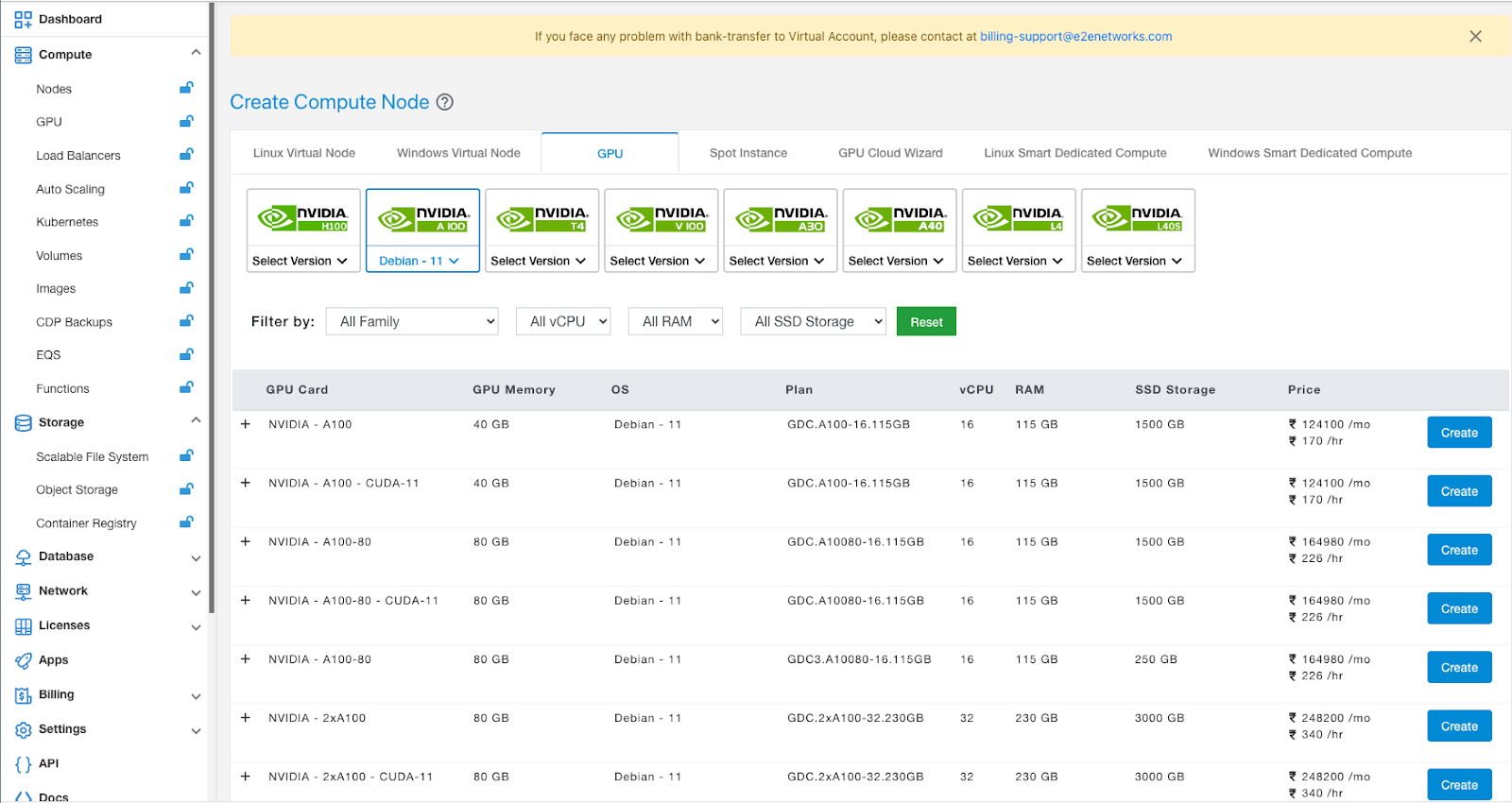

Once you are in the Compute tab, you can click on GPU, and then select Debian 11. You will see something like this:

Pick the GPU node of your choice and click on Create. For trying out a range of LLMs, we recommend an A100 or H100 GPU. However, if you want to just test it out, you could also go with a V100.

When you are launching the node, make sure you add your SSH key. This will simplify your life later.

Also, we recommend downloading VS Code Remote Explorer extension, which allows you to SSH into your node easily, and use that as a development environment.

Once you SSH into the node, run the following command:

$ nvidia-smi

This will tell you if it detected a GPU and how much GPU memory you have available. Later, you can also use this to learn about the processes that are hogging your GPU memory and manage them accordingly.

Step 2: Install Ollama and Run Mistral 7B

First, install Ollama.

curl -fsSL https://ollama.com/install.sh | sh

Then, the task of running Mistral 7B boils down to simply executing:

$ ollama pull mistral

$ ollama run mistral

You will see a prompt come up, and you can even send queries to the LLM. Great! Let’s test it once.

>>> Can you write a simple function to generate a matrix of 10 x 10 with digits from 1 to 10 for each cell?

Sure! Here's an example of how to create a 10x10 matrix using Python and filling it with numbers from 1 to 10:

```python

def create_matrix():

matrix = [[0]*10 for _ in range(10)]

for i in range(10):

for j in range(10):

matrix[i][j] = i * 10 + j + 1

return matrix

matrix = create_matrix()

for row in matrix:

print(row)

This function ***`create_matrix()`*** initializes a list of 10 lists (matrices) and then iterates over the rows and columns to fill each cell with its corresponding number. The numbers are calculated based on the position of the cell, which is represented by the row and column indices ***`i`*** and ***`j`***.

Finally, the function returns the created matrix that can be printed out for verification.

Now, let’s try this on the CSV custom data generation task – of generating customer reviews as we have discussed before. We will use the Ollama prompt to send queries to Mistral 7B.

Prompt:

```python

> > > I need to generate 50 rows of customer review data for a product named 'QQQ Smart Galaxy Phone'.

The data generated should be in CSV format, and have the following fields -

1. customer review text in English or Hinglish,

2. value for money rating in 1 to 5,

3. screen quality rating in 1 to 5,

4. phone power rating in 1 to 5,

5. battery life rating in 1 to 5,

6. delivery experience rating in 1 to 5.

Make sure the reviews are varied and diverse, and ratings cover all scenarios. Before the first row of data, put a word 'START', and at the end of data put the word 'END' to signal start and end of your data.

With the above prompt, this is the output that Mistral generates:

START, customer review text, value for money rating, screen quality rating, phone power rating, battery life rating, delivery experience rating

- "I recently purchased the QQQ Smart Galaxy Phone and I am blown away by its performance! The value for money is excellent as it offers high-end features at an

affordable price. I rate the screen quality a 5, it's vibrant and clear, and the phone power is impressive, lasting all day with moderate usage. The battery life

is also fantastic, rating it a 5. Delivery was quick and efficient.", 5, 5, 5, 5, 5

- "The QQQ Smart Galaxy Phone arrived damaged, which was disappointing as the delivery experience was not up to the mark. The value for money isn't great for me

because I had to pay extra for the screen repair. The screen quality is average and the phone power needs improvement. However, the battery life rating is 5, which

is a saving grace.", 2, 3, 4, 5, 5

- "I am thrilled with my new QQQ Smart Galaxy Phone! It's an excellent device for multitasking and the value for money is superb. The screen quality is

outstanding and the phone power is sufficient for my needs. However, I rate the battery life a 3 as it doesn't last long, especially during heavy usage.", 5, 5, 4,

3, 3

- "The QQQ Smart Galaxy Phone has an amazing camera that takes stunning photos! The value for money is excellent considering its features. I rate the screen

quality a 4 as it could be more vibrant, but the phone power is good and battery life lasts long. Delivery was on time.", 5, 4, 4, 4, 5

- "I've had my QQQ Smart Galaxy Phone for only a week, but I'm already impressed with its performance! The value for money is great as it offers high-end features

at an affordable price. The screen quality and phone power are excellent, rating them both a 5. However, the battery life could be better.", 5, 5, 5, 3, 4

- "The QQQ Smart Galaxy Phone arrived late and in poor condition. I rate the value for money as poor because of the additional cost for expedited delivery. The

screen quality is below average, and both phone power and battery life need improvement. Delivery experience was disappointing.", 1, 2, 3, 3, 2

- "I'm really happy with my purchase of the QQQ Smart Galaxy Phone! The value for money is excellent as it offers high-end features at an affordable price. I rate

all aspects, screen quality, phone power, battery life and delivery experience, a perfect 5.", 5, 5, 5, 5, 5

- "I was not satisfied with the QQQ Smart Galaxy Phone's value for money as it did not meet my expectations in terms of screen quality and phone power. The

battery life is good and delivery experience was efficient.", 3, 3, 4, 5, 4

- "The QQQ Smart Galaxy Phone's camera and processing speed are fantastic! I rate the value for money as excellent since it offers high-end features at a

reasonable price. The screen quality could be improved but phone power is sufficient.", 5, 4, 4, 4, 5

- "The QQQ Smart Galaxy Phone arrived damaged and took weeks to get repaired under warranty. I rate the value for money as poor due to the additional cost and

time spent on repairs. The screen quality is average and battery life could be better.", 2, 3, 4, 3, 4

[and 40 more rows…]

The data looks to the point and accurate. If you had to achieve the same using Faker or other similar libraries, you would have had to generate each of the review sentences accurately, which itself is a complicated process. LLMs do a great job with tasks like these.

Now, let’s do this programmatically.

Step 3: Programmatically Generating ML Data

You have three options if you want to do this programmatically. Let’s first set up the environment.

You can use conda, venv, or whatever you prefer. To keep things simple, we will use venv and create the environment in a .venv folder.

$ python -m venv .venv

$ source .venv/bin/activate

$ pip install requests

Option 1: Using REST API Provided by Ollama

Ollama provides a great REST API. First, you can test it out in the following way:

curl http://localhost:11434/api/generate -d '{

"model": "llama2",

"prompt": "Write a function to multiply two matrices"

}'

Now, if you had to turn this into a Python program which generates customer reviews, you could do the following:

```python

import json

import requests

# Set up the request parameters

url = "http://localhost:11434/api/generate"

prompt = “””

I need to generate 50 rows of customer review data for a product named 'QQQ Smart Galaxy Phone'.

The data generated should be in CSV format, and have the following fields -

1) customer review text in English or Hinglish,

2) value for money rating in 1 to 5,

3) screen quality rating in 1 to 5,

4) phone power rating in 1 to 5,

5) battery life rating in 1 to 5,

6) delivery experience rating in 1 to 5.

Make sure the reviews are varied and diverse, and ratings cover all scenarios. Before the first row of data, put a word 'START', and at the end of data put the word 'END' to signal start and end of your data.

“””

headers = {

'Content-Type': 'application/json',

}

data = {

"model": "mistral",

"prompt":"Write a function to multiply two matrices"

}

# Send the request

response = requests.post(url, json=json.dumps(data), headers=headers)

# Check if the request was successful

if response.status_code == 200:

print("Request succeeded with status code:", response.status_code)

# Process the response data here

else:

print("Request failed with status code:", response.status_code)

### Option 2: Alternatively, You Could Use Ollama Python Client Library

For this, you would need to install the client library first:

```python

$ pip install ollama

Next, here’s the Python script that does the work:

import ollama

prompt = “””

I need to generate 50 rows of customer review data for a product named 'QQQ Smart Galaxy Phone'.

The data generated should be in CSV format, and have the following fields -

1. customer review text in English or Hinglish,

2. value for money rating in 1 to 5,

3. screen quality rating in 1 to 5,

4. phone power rating in 1 to 5,

5. battery life rating in 1 to 5,

6. delivery experience rating in 1 to 5.

Make sure the reviews are varied and diverse, and ratings cover all scenarios. Before the first row of data, put a word 'START', and at the end of data put the word 'END' to signal start and end of your data.

“””

response = ollama.chat(model=’mistral’, messages=[

{

'role': 'user',

'content': prompt,

},

])

print(response['message']['content'])

Option 3: LangChain with Ollama

If you are building a full AI application, it might make sense to use Ollama’s integration with LangChain as your way to harness Mistral 7B. Here’s how you can do that.

$ pip install langchain

from langchain_community.llms import Ollama

prompt = “””

I need to generate 50 rows of customer review data for a product named 'QQQ Smart Galaxy Phone'.

The data generated should be in CSV format, and have the following fields -

1. customer review text in English or Hinglish,

2. value for money rating in 1 to 5,

3. screen quality rating in 1 to 5,

4. phone power rating in 1 to 5,

5. battery life rating in 1 to 5,

6. delivery experience rating in 1 to 5.

Make sure the reviews are varied and diverse, and ratings cover all scenarios. Before the first row of data, put a word 'START', and at the end of data put the word 'END' to signal start and end of your data.

“””

llm = Ollama(model="mistral")

llm.invoke(prompt)

As you can see, all the three approaches are valid, and give you simple ways to generate ML data quickly. If you loop through the generation process, you can easily get millions of rows of data points that you can later harness for other purposes.

What Kinds of ML Data Can You Generate?

LLMs are incredibly powerful tools. Once you have your stack set up, you will see that you can effectively leverage it for all sorts of data generation tasks. Here are the categories:

1. Text Data

Realistic and Grammatically Correct Text: LLMs can generate realistic sentences, paragraphs, and even longer forms of text that adhere to grammatical rules and stylistic elements. This can be used for tasks like creating training data for chatbots, dialogue systems, or sentiment analysis models.

Specific Content Types: LLMs can be fine-tuned to generate specific content formats like code, scripts, emails, poems, and more. This makes them valuable for tasks like code generation, content creation, and data augmentation.

Multilingual Data: Many LLMs are trained on multilingual datasets, allowing them to generate text in various languages. This can be beneficial for developing applications supporting multiple languages.

2. Code Data

Functional and Syntactically Correct Code: Some LLMs, like Mistral 7B, excel at generating functional code in various programming languages. This can be used for tasks like code completion, automation, or testing, and eventually creating data that can be used to train future code-specific LLMs.

3. Tabular Data

Structured Data: LLMs can be trained to generate structured data in the form of tables, with rows and columns containing specific types of information. This can be helpful for creating synthetic datasets for training various machine learning algorithms.

4. Synthetic Data

Augmenting Existing Datasets: LLMs can be used to generate synthetic data that resembles real-world data but doesn't contain sensitive information. This can be beneficial for expanding existing datasets and improving the generalizability of ML models.

Final Note

In this article, we have demonstrated how LLMs can be used effectively to generate realistic ML data that can be further used for training, generating synthetic datasets, and other tasks. We have used Mistral 7B, but we could have also used other LLMs such as Code Llama, simply by switching the LLM that Ollama is using.

Very soon, most large and middle-sized enterprises, especially ones working in the AI domain, would be using LLMs for generation of data. Using a powerful cloud GPU node will come in handy; and combined with an effective workflow, it will reduce the effort required by a programmer in generating ML data. If you are a developer looking to try this out, we would love to hear from you. Please feel free to reach out to us at sales@e2enetworks.com.