Introduction to Video AI

In recent years, artificial intelligence (AI) has transformed video analysis, enabling embedded video products such as smart cameras and intelligent digital video recorders to automate tasks that once required human monitoring. Unlike video compression, which only reduces video size, this technology focuses on extracting meaningful and relevant information from digital video, a field known as video analytics or video content analysis (VCA).

Video AI solutions build on research in computer vision, pattern analysis, and machine intelligence, and are used across industries such as surveillance, retail, and transportation. AI and machine learning have emerged as key drivers in unlocking value from video data, automating and enhancing analytical operations across different domains.

With AI development services for video analytics and the integration of IoT devices and applications, generating and processing data from video sources has become more accessible. AI and deep learning models can be trained on extensive video footage to automatically identify, classify, tag, and label specific objects in video streams.

How AI Enables the Video Surveillance Market

The video surveillance market has experienced a significant transformation with the introduction of AI-powered video analysis. This technology promises a more efficient and effective approach to monitoring, offering real-time detection of temporal and spatial events in videos. AI-driven video analytics holds the potential to reshape various industries, including manufacturing and automotive, by enhancing productivity and reducing operational challenges.

Benefits of Video AI for Real-Time Insights

Monitoring and maintaining surveillance systems, especially with a large number of cameras, can be a daunting task. Video analytics offers a solution by employing complex algorithms to analyze recorded video streams comprehensively. It reviews camera images pixel by pixel, minimizing the chance of missing critical details. Furthermore, video analytics can be tailored to specific security or business requirements, applying filters intelligently to focus on relevant information.

According to the Artificial Intelligence Global Surveillance Index, at least 75 out of 176 countries worldwide actively use AI-based surveillance technologies, demonstrating the widespread adoption of AI in the surveillance sector.

Top Challenges in Video AI

Despite its many advantages, video AI also faces some challenges:

- Data Volume: The amount of data collected from video analysis tools continues to grow, posing challenges in terms of data storage and processing.

- Human Resources: The effectiveness of CCTV monitoring systems depends on the ability of human operators to handle the vast amount of data generated.

- Security: With the increasing number of hacking and internet breaches, the security of CCTV surveillance systems is a critical concern for organizations.

Navigating these challenges can be daunting for consumers seeking technology solutions that align with their needs and deliver successful outcomes.
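To make the data volume challenge concrete, here is a rough back-of-the-envelope sketch in Python. The function name and the deployment figures (100 cameras, 4 Mbps per stream, 30 days of retention) are assumptions chosen purely for illustration, not measurements from a real system.

```python
def storage_terabytes(num_cameras: int, bitrate_mbps: float, retention_days: int) -> float:
    """Estimate storage for continuous recording, in terabytes (10**12 bytes)."""
    seconds = retention_days * 24 * 60 * 60
    total_bits = num_cameras * bitrate_mbps * 1_000_000 * seconds
    return total_bits / 8 / 1e12


# Hypothetical deployment: 100 cameras at 4 Mbps each, kept for 30 days.
estimate = storage_terabytes(num_cameras=100, bitrate_mbps=4.0, retention_days=30)
print(f"{estimate:.1f} TB")  # prints "129.6 TB"
```

Even with these modest assumed numbers the footage adds up to well over a hundred terabytes, which is why storage and processing capacity need to be planned early.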

Technology Stack for Video AI

Video analytics involves several key tasks, including object detection, object recognition, object tracking, real-time video analytics, and triggering real-time alerts. These tasks rely on a technology stack that includes libraries such as OpenCV for image processing and TensorFlow for high-precision object detection. Let's explore these key tasks:

5.1 Object Detection: This computer vision task identifies and locates objects in an image or video, enabling object counting and precise positioning.

5.2 Object Recognition: Object recognition relies on deep learning and machine learning algorithms to quickly identify objects, scenes, and visual information within images or video streams.

5.3 Object Tracking: Object tracking is essential in monitoring objects as they move through a sequence of frames in computer vision applications, including sports analysis and surveillance.

5.4 Real-Time Video Analytics: Video cameras generate vast amounts of data, making manual reviews impractical. Real-time analytics processes this data as it arrives and surfaces insights immediately.

5.5 Triggering Real-Time Alerts: Real-time alerts are crucial for situational awareness. These alerts can be based on appearance similarity, count-based criteria, or even facial recognition technology.
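The tracking and alerting tasks above can be sketched in a few lines of plain Python. This is a toy illustration under stated assumptions: the `CentroidTracker` class, the 50-pixel matching threshold, and the `count_alert` helper are all hypothetical names and values, and the greedy nearest-centroid matching shown here is far simpler than what production trackers use.

```python
import math
from dataclasses import dataclass, field


@dataclass
class CentroidTracker:
    """Toy tracker: match each detection to the nearest known track."""
    max_distance: float = 50.0                   # assumed pixel threshold for a match
    next_id: int = 0
    tracks: dict = field(default_factory=dict)   # track_id -> (x, y)

    def update(self, centroids):
        """Assign a track ID to every centroid in the current frame."""
        assigned = {}
        unmatched = dict(self.tracks)
        for cx, cy in centroids:
            best_id, best_dist = None, self.max_distance
            for track_id, (tx, ty) in unmatched.items():
                dist = math.hypot(cx - tx, cy - ty)
                if dist <= best_dist:
                    best_id, best_dist = track_id, dist
            if best_id is None:
                # No existing track is close enough: start a new one.
                best_id = self.next_id
                self.next_id += 1
            else:
                del unmatched[best_id]
            assigned[best_id] = (cx, cy)
        # Tracks without a match in this frame are dropped (no occlusion handling).
        self.tracks = assigned
        return assigned


def count_alert(tracked, limit):
    """Return an alert message when more than `limit` objects are present."""
    if len(tracked) > limit:
        return f"ALERT: {len(tracked)} objects in view (limit {limit})"
    return None


tracker = CentroidTracker()
frame1 = tracker.update([(100, 100), (300, 200)])
frame2 = tracker.update([(305, 204), (104, 98), (500, 400)])
print(frame2)                        # IDs 0 and 1 persist, ID 2 is new
print(count_alert(frame2, limit=2))  # ALERT: 3 objects in view (limit 2)
```

The centroids would normally come from the centers of detected bounding boxes. Keeping IDs stable across frames is what makes count-based and per-person alerts possible.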

Top Industrial Applications of Video AI

AI-based video analysis finds applications in various industries:

- Healthcare: Video analytics can be used to monitor patient and guest flow, reducing wait times and optimizing entry to emergency areas.

- Smart Cities/Transportation: Video analytics plays a vital role in traffic control, reducing accidents and congestion in urban areas.

- Retail: Brick-and-mortar stores can use video analytics to understand customer behavior and improve marketing strategies.

- Security: Facial and license plate recognition systems enhance security by quickly identifying individuals and vehicles, enabling real-time decision-making.

Top Use Cases of Video AI

Here are some key use cases of video analytics:

- Face Recognition: Face recognition technology identifies individuals based on facial features, allowing for ID verification and alert generation for unknown individuals.

- Behavior Detection: Behavior detection monitors individuals' actions within a facility and triggers alerts if suspicious behavior is detected.

- Person Tracking: Person tracking involves assigning IDs to individuals in video frames, allowing for monitoring and tracking their movements within a facility.

- Crowd Detection: Crowd analysis and detection are crucial for surveillance and scene understanding, especially in crowded environments.

- People Count/Presence: Video analytics can track attendance and the number of people in a building, providing valuable information for various applications.

- Time Management: Time management features help monitor individuals' activity and send alerts when people exceed their allotted time in a specific area.

- Zone Management and Boundary Detection: Video analytics can track entry into restricted areas, providing insights into who enters these areas and when.
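As a rough illustration of the zone management and time management use cases, the sketch below tests whether a tracked position lies inside a polygonal zone and adds up how long a track stayed there. The function names, the rectangular zone, and the sample path are hypothetical. The point-in-polygon check uses the standard ray-casting method.

```python
def point_in_polygon(point, polygon):
    """Ray-casting test: True if `point` lies inside `polygon` (list of (x, y))."""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Does this edge straddle the horizontal line through the point?
        if (y1 > y) != (y2 > y):
            # x coordinate where the edge crosses that line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def dwell_seconds(observations, polygon):
    """Total time spent inside the zone.

    `observations` is a time-sorted list of (timestamp_seconds, (x, y)).
    """
    total = 0.0
    for (t0, p0), (t1, _p1) in zip(observations, observations[1:]):
        if point_in_polygon(p0, polygon):
            total += t1 - t0
    return total


# Hypothetical restricted zone (pixel coordinates) and one person's path.
restricted_zone = [(0, 0), (200, 0), (200, 100), (0, 100)]
path = [
    (0.0, (250, 50)),   # outside
    (1.0, (150, 50)),   # inside
    (2.0, (100, 50)),   # inside
    (3.0, (50, 50)),    # inside
    (4.0, (-20, 50)),   # outside again
]

time_in_zone = dwell_seconds(path, restricted_zone)
print(time_in_zone)  # 3.0
if time_in_zone > 2.0:  # assumed time limit in seconds
    print("ALERT: time limit exceeded in restricted zone")
```

In a real deployment the positions would come from a person tracker, and the zone polygon would be drawn by an operator on the camera view.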

How AI-Powered Video Analytics Works

The implementation of AI-powered video analytics solutions can vary depending on the specific use case. Generally, there are two approaches: real-time analysis and post-processing.

- Real-Time Analysis: In this approach, the system is configured to trigger alerts for specific events as they occur. This provides immediate situational awareness and response capabilities.

- Post-Processing: Post-processing involves running specialized searches on recorded content to enable forensic investigations after incidents have occurred.
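The difference between the two approaches can be shown with a small sketch. The `Detection` record, the `WATCHLIST` set, and both helper functions are hypothetical. The point is only that the real-time path reacts as each event arrives, while the post-processing path queries events that were stored earlier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    timestamp: float   # seconds since the start of the recording
    camera: str
    label: str


WATCHLIST = {"person"}   # hypothetical classes that should raise an alert


def process_live(detection, log, alerts):
    """Real-time path: store the event and alert immediately if needed."""
    log.append(detection)
    if detection.label in WATCHLIST:
        alerts.append(
            f"{detection.camera}: {detection.label} at t={detection.timestamp:.0f}s"
        )


def search_log(log, label, start, end):
    """Post-processing path: forensic query over already recorded events."""
    return [d for d in log if d.label == label and start <= d.timestamp <= end]


log, alerts = [], []
for det in [
    Detection(10.0, "gate", "car"),
    Detection(42.0, "lobby", "person"),
    Detection(95.0, "gate", "truck"),
    Detection(130.0, "gate", "car"),
]:
    process_live(det, log, alerts)

print(alerts)                                   # ['lobby: person at t=42s']
print(search_log(log, "car", start=0, end=60))  # only the car seen at t=10
```

Both paths can share the same event log, so a system does not have to choose one approach exclusively.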

Getting Started with Video AI

To start with Video AI, you'll need to:

- Choose a Framework: Select a video analysis framework or library, such as OpenCV, TensorFlow, or PyTorch, depending on your project's requirements.

- Collect and Annotate Data: Gather a dataset of videos relevant to your application and annotate it for training your models.

- Use Pre-trained Models: Utilize pre-trained models for tasks like object detection, action recognition, or face recognition to jumpstart your project.

- Customization: Fine-tune pre-trained models or build your own deep learning models to meet specific requirements.

- Deployment: Deploy your Video AI system to the cloud or edge devices as needed.

- Monitoring and Maintenance: Regularly monitor and maintain your Video AI system to ensure its continued accuracy and relevance.
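For the data collection step, annotating every frame of a video is rarely necessary because neighboring frames look almost identical. One simple option, sketched here with a hypothetical helper and assumed numbers, is to pick evenly spaced frame indices and annotate only those.

```python
def sample_frame_indices(total_frames, video_fps, samples_per_second):
    """Indices of frames to extract, keeping about `samples_per_second` per second."""
    if video_fps <= 0 or samples_per_second <= 0:
        raise ValueError("video_fps and samples_per_second must be positive")
    step = max(1, round(video_fps / samples_per_second))
    return list(range(0, total_frames, step))


# Assumed example: a 10-second clip at 30 fps, sampled twice per second.
indices = sample_frame_indices(total_frames=300, video_fps=30, samples_per_second=2)
print(len(indices), indices[:4])  # 20 [0, 15, 30, 45]
```

The resulting indices can then be used to seek to and save the selected frames with the video library of your choice.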

Hands-on Use Case

Object Detection in Surveillance

Before we get into the code example, let us look at the files needed to run the popular object detection algorithm YOLO (You Only Look Once):

- Weights File (.weights): This file contains the trained neural network model's weights. These weights are the result of training YOLO on a large dataset, like COCO (Common Objects in Context), to recognize various objects in images. The weights file is typically quite large and is crucial for making predictions using the pre-trained YOLO model.

- Configuration File (.cfg): The configuration file contains the architecture and hyperparameters of the YOLO model. It specifies details like the number of convolutional layers, filters, anchor boxes, and other network settings. The configuration file is necessary to recreate the YOLO architecture and configure the model correctly. Different YOLO variants (e.g., YOLOv3, YOLOv4) have different configuration files tailored to their specific architectures.

- COCO Names File (.names): This is a simple text file that contains the names of the objects that the YOLO model was trained to detect using the COCO dataset. Each line in the file represents the name of a different object class, such as "person," "car," "dog," etc. The names file helps map the numerical class predictions made by the model to human-readable class labels, making the output of the model more interpretable.

These files are essential for working with YOLO on object detection tasks. You typically need the weights file to load a pre-trained model, the configuration file to define the model architecture, and the names file to interpret the model's output predictions.
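To get a feel for the two text files, the sketch below parses a Darknet-style configuration and a names list in plain Python. The `SAMPLE_CFG` and `SAMPLE_NAMES` strings are shortened, made-up stand-ins rather than the contents of the real yolov3.cfg or coco.names, and `parse_darknet_cfg` is a hypothetical helper written for this illustration.

```python
SAMPLE_CFG = """
[net]
width=416
height=416

# first convolutional block
[convolutional]
filters=32
size=3
activation=leaky

[convolutional]
filters=64
size=3

[yolo]
classes=80
"""

SAMPLE_NAMES = "person\nbicycle\ncar"


def parse_darknet_cfg(text):
    """Parse Darknet-style cfg text into an ordered list of (section, options)."""
    blocks = []
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        if line.startswith("[") and line.endswith("]"):
            blocks.append((line[1:-1], {}))
        elif "=" in line and blocks:
            key, value = line.split("=", 1)
            blocks[-1][1][key.strip()] = value.strip()
    return blocks


blocks = parse_darknet_cfg(SAMPLE_CFG)
conv_layers = [opts for name, opts in blocks if name == "convolutional"]
print(len(conv_layers), [opts["filters"] for opts in conv_layers])  # 2 ['32', '64']

# The names file maps a numeric class ID to a readable label, one label per line.
names = SAMPLE_NAMES.strip().split("\n")
print(names[2])  # car
```

A small hand-written parser is used because section names such as `[convolutional]` repeat in these files, and Python's `configparser` rejects duplicate section names by default.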

Code Example (using Python and OpenCV):

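import cv2

# Load the pre-trained YOLOv3 model.
# Adjust the paths to the weights and cfg files if they are stored elsewhere.
net = cv2.dnn.readNet("yolov3.weights", "yolov3.cfg")

# Load the class names (adjust the file path here as well if required).
with open("coco.names") as f:
    classes = f.read().strip().split("\n")

# Names of the output layers whose results are read after a forward pass.
output_layers = net.getUnconnectedOutLayersNames()

# Open the default camera (device 0) as the video feed.
cap = cv2.VideoCapture(0)

while True:
    ret, frame = cap.read()
    if not ret:
        # Stop when no frame could be read (camera unavailable or stream ended).
        break

    # Perform object detection: run the frame through the network.
    # 416x416 is a common YOLOv3 input size; match it to your cfg file.
    blob = cv2.dnn.blobFromImage(frame, 1 / 255.0, (416, 416), swapRB=True, crop=False)
    net.setInput(blob)
    outputs = net.forward(output_layers)

    # Display results on frame (drawing the detected boxes is left out here).
    cv2.imshow("Object Detection", frame)

    # Press "q" to quit.
    if cv2.waitKey(1) & 0xFF == ord("q"):
        break

cap.release()
cv2.destroyAllWindows()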

Note: Download the YOLO weights, config file, and coco.names before running the code.
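The detection step itself mostly consists of decoding the raw network output. The sketch below shows one common way to do this in plain Python, under these assumptions: each output row has the layout `[center_x, center_y, width, height, objectness, class scores...]` with coordinates normalized to the frame size, the highest class score is used as the confidence (a convention seen in many OpenCV YOLOv3 tutorials), and the thresholds of 0.5 and 0.4 are arbitrary. The `fake_outputs` list stands in for what `net.forward(output_layers)` would return, and all function names are hypothetical.

```python
def decode_detections(outputs, frame_w, frame_h, conf_threshold=0.5):
    """Turn raw rows into (x, y, w, h, class_id, confidence) pixel boxes."""
    boxes = []
    for layer_output in outputs:
        for row in layer_output:
            scores = list(row[5:])
            class_id = max(range(len(scores)), key=scores.__getitem__)
            confidence = float(scores[class_id])
            if confidence < conf_threshold:
                continue
            w, h = row[2] * frame_w, row[3] * frame_h
            x = row[0] * frame_w - w / 2   # convert center to top-left corner
            y = row[1] * frame_h - h / 2
            boxes.append((int(round(x)), int(round(y)),
                          int(round(w)), int(round(h)), class_id, confidence))
    return boxes


def iou(a, b):
    """Intersection over union of two (x, y, w, h, ...) boxes."""
    ax, ay, aw, ah = a[:4]
    bx, by, bw, bh = b[:4]
    ix = max(0, min(ax + aw, bx + bw) - max(ax, bx))
    iy = max(0, min(ay + ah, by + bh) - max(ay, by))
    inter = ix * iy
    union = aw * ah + bw * bh - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(boxes, iou_threshold=0.4):
    """Keep the most confident box among overlapping boxes of the same class."""
    kept = []
    for box in sorted(boxes, key=lambda b: b[5], reverse=True):
        if all(box[4] != k[4] or iou(box, k) <= iou_threshold for k in kept):
            kept.append(box)
    return kept


# Made-up output with three classes; real COCO models produce 80 class scores.
names = ["person", "bicycle", "car"]
fake_outputs = [
    [[0.50, 0.5, 0.2, 0.4, 0.9, 0.05, 0.02, 0.9],
     [0.51, 0.5, 0.2, 0.4, 0.8, 0.03, 0.01, 0.7]],   # near-duplicate of the first
    [[0.10, 0.2, 0.1, 0.1, 0.6, 0.60, 0.10, 0.0],
     [0.80, 0.8, 0.1, 0.1, 0.2, 0.10, 0.20, 0.1]],   # below the threshold
]

kept = non_max_suppression(decode_detections(fake_outputs, frame_w=640, frame_h=480))
for x, y, w, h, class_id, confidence in kept:
    print(names[class_id], (x, y, w, h), confidence)
```

With real frames, each kept box can be drawn with `cv2.rectangle` and labeled with `cv2.putText`. OpenCV also provides `cv2.dnn.NMSBoxes` for the suppression step, which is usually faster than a pure Python loop.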

Video Summarization

Code Example (using Python and moviepy library):

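from moviepy.video.io.VideoFileClip import VideoFileClip

# Load video
video = VideoFileClip("input_video.mp4")

# Segment to keep, in seconds (example values; choose your own)
start_time = 0
end_time = 10

# Generate video summary
# subclip() is the MoviePy 1.x name; MoviePy 2.x renamed it to subclipped().
summary = video.subclip(start_time, end_time)

# Save summary
summary.write_videofile("video_summary.mp4")

# Release the file handles held by the clip
video.close()

Note: Adjust the video path to match your own file.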
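The `start_time` and `end_time` values have to come from somewhere. One simple heuristic, offered here only as an assumption and not as an established summarization method, is to keep the window of the video with the most detections. The `busiest_window` helper and the per-second counts below are hypothetical.

```python
def busiest_window(counts_per_second, window_seconds):
    """Return (start_time, end_time) of the window with the most detections."""
    if not 0 < window_seconds <= len(counts_per_second):
        raise ValueError("window_seconds must fit inside the recording")
    current = sum(counts_per_second[:window_seconds])
    best_sum, best_start = current, 0
    for start in range(1, len(counts_per_second) - window_seconds + 1):
        # Slide the window by one second: add the new value, drop the old one.
        current += counts_per_second[start + window_seconds - 1]
        current -= counts_per_second[start - 1]
        if current > best_sum:
            best_sum, best_start = current, start
    return best_start, best_start + window_seconds


# Made-up number of detected objects in each second of a 10-second clip.
counts = [0, 0, 1, 3, 5, 4, 0, 0, 1, 0]
start_time, end_time = busiest_window(counts, window_seconds=3)
print(start_time, end_time)  # 3 6
```

The per-second counts could be produced by running an object detector over the video, and the resulting pair of times can then be used to cut the summary clip.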

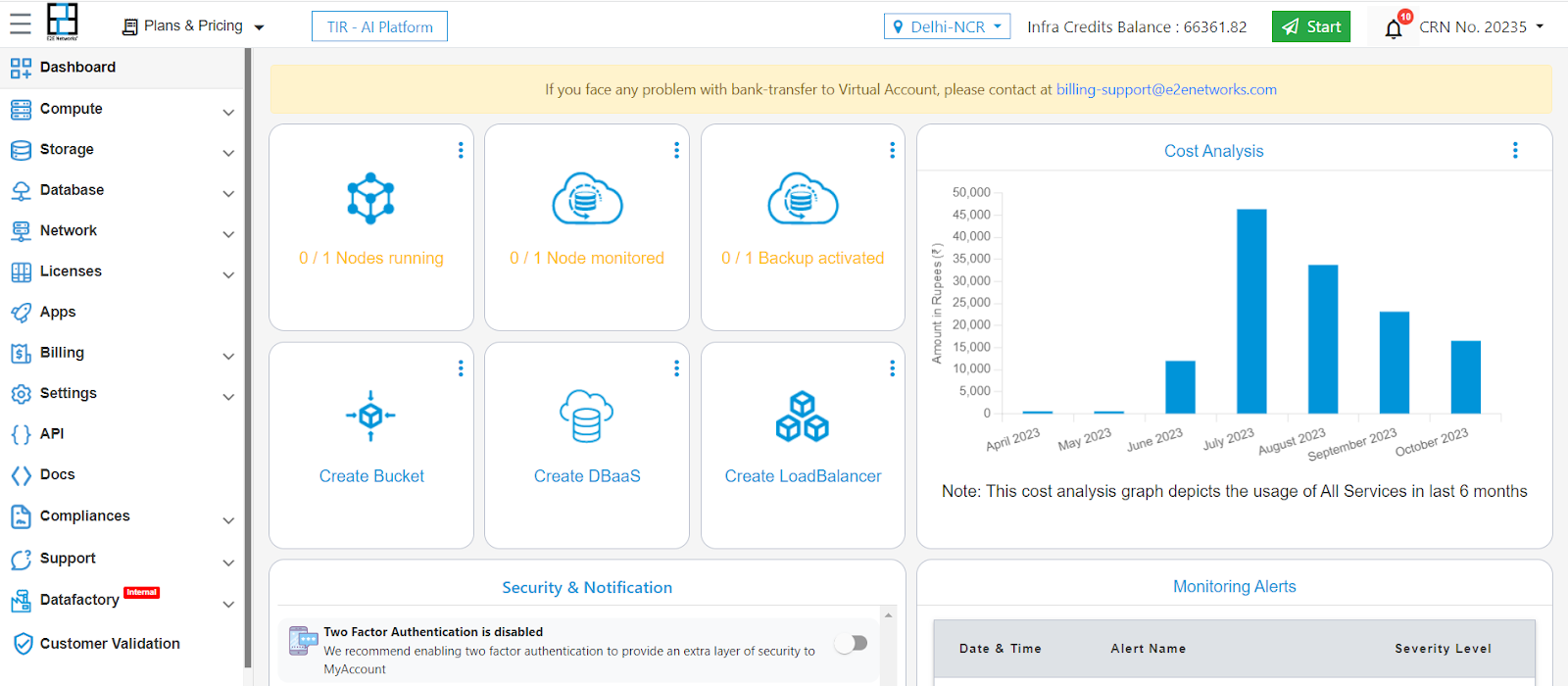

If you run short of compute resources, you can use the cloud computing solutions offered at e2enetworks.com.

Here are the steps to launch a node on the E2E website:

Under the settings on the left panel, provide your SSH key.

Conclusion

AI-based video analytics has become integral to our daily lives, offering solutions across various sectors. Its potential applications have grown significantly in recent years, making processes more efficient and cost-effective. From smart cities to healthcare and retail, AI-powered video analytics enhances operations and security. To explore the possibilities and benefits of this technology further, consider consulting with experts in the field. The future of video analytics is undoubtedly promising, and it continues to evolve as AI advances.