What Is SaulLM-7B?

SaulLM is a first-of-its-kind large language model designed and trained specifically for the legal domain. It is built upon the architecture of the Mistral 7B model.

The model understands the intricacies of legal language better than general purpose LLMs, making it adept at responding to user queries about various legal tasks.

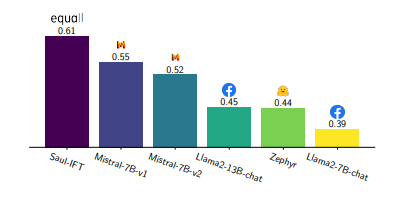

Comparison of SaulLM with other models on a legal benchmark

The development of SaulLM involved a two-step process: continued pre-training on a legal corpus and instruction fine-tuning with a blend of general and legal instructions.

Dataset and Pre-training:

To capture the nuances of legal language across diverse jurisdictions, a 30-billion token dataset was compiled. This included:

- Legal Corpus: Publicly available legal datasets: FreeLaw, MultiLegal Pile, English EuroParl, GovInfo, Law Stack Exchange, Open Australian Legal Corpus, EU Legislation, UK Legislation, Court Transcripts, and UPSTO.

- Replay Sources: Wikipedia, StackExchange, and GitHub to mitigate catastrophic forgetting.

- Instruction Sources: Super natural Instructions and FLAN collection to enhance instruction following capabilities.

Instruction Fine-tuning: The Mistral 7B model was further trained on the cleaned legal corpus, enhancing its understanding of legal language and concepts. Instruction Fine-tuning included:

- Instruction Mix: A combination of general and legal instructions was used to fine-tune the model.

- General Instructions: SlimOrca, Meta Math Question Answering Instructions, General Conversations from UltraChat, and Code Instructions from Glaive Code Assistant v2.

- Legal Instructions: Synthetically generated conversations addressing fundamental legal competencies across various legal document types. These were created using a Mistral-7B-Instruct model to transform legal texts and metadata into coherent dialogues.

Making a Streaming Law Chatbot with SaulLM-7B, Ollama, and Gradio

The SaulLM 7B requires around 7.7GB of GPU memory to operate. We can launch a T4 GPU node on https://myaccount.e2enetworks.com. That would suffice for our Chatbot application.

We’ll use Ollama to run our LLM. Install it with the following command.

curl -fsSL https://ollama.com/install.sh | sh

This will launch a server on localhost:11434. Then pull the SaulLM model.

ollama pull adrienbrault/saul-instruct-v1:Q8_0

Install the following dependencies from your Juptyer Notebook.

pip install ollama gradio

Then we’ll write functions to be used in the Gradio UI. The first function takes in a user message and the chatbot’s history as input, and returns an empty string and chatbot history appended with the user message.

The second function takes in the chatbot’s history as input and modifies it by adding the LLM’s response. Instead of returning the response as a single text string, it streams it token by token. This makes the response more human friendly since users don’t have to wait for the results, and can start reading it as soon as the LLM starts producing the text.

from ollama import Client

client = Client(host='http://localhost:11434')

def user(user_message, history):

return "", history + [[user_message, None]]

def bot(history):

query = history[-1][0]

history[-1][1] = ""

try:

for token in client.chat(model='adrienbrault/saul-instruct-v1:Q8_0', messages=[{'role': 'user', 'content': query}], stream=True):

# Use yield to stream the response

history[-1][1] += token['message']['content']

yield history

except Exception as e:

# Stop the stream and handle the exception if necessary

yield str(e)

Below is the code for the Gradio UI. Once the user submits the message, the user function is called, and it returns an empty string clearing the user message. It also prints the chatbot history on the screen which is currently only the user message.

Next, it calls the bot function which adds the response from the LLM to the chatbot history and prints it on the screen token by token.

import gradio as gr

with gr.Blocks() as demo:

chatbot = gr.Chatbot()

msg = gr.Textbox()

clear = gr.Button("Clear")

msg.submit(user, [msg, chatbot], [msg, chatbot], queue=False).then(

bot, chatbot, chatbot

)

clear.click(lambda: None, None, chatbot, queue=False)

demo.queue()

demo.launch(share=True)

Results

Here’s a screenshot of our Chatbot in action:

We asked it a legal question to test it out.

Question: How does the 'doctrine of equivalents' apply in patent infringement cases across different jurisdictions, and what are the implications of this doctrine for international patent enforcement?

Response: The doctrine of equivalents is a legal principle used to determine patent infringement when a product or process is not an exact copy of the patented invention. This doctrine allows courts to find that two products or processes are equivalent if they perform substantially the same function in substantially the same way, even if there are minor differences in their design or structure.

The application of the doctrine of equivalents varies across different jurisdictions. In some countries, like the United States and Canada, this doctrine is interpreted broadly, allowing for a more flexible approach to patent infringement cases. In contrast, other countries, such as the European Union, have a stricter interpretation of the doctrine, requiring a closer resemblance between the accused product or process and the patented invention.

The implications of this doctrine for international patent enforcement are significant. When a patent holder files an infringement case in a jurisdiction with a broad interpretation of the doctrine of equivalents, it may be easier for them to win the case, even if there are minor differences between the accused product or process and the patented invention. This can lead to more expensive and complex litigation processes across different countries, as patent holders may need to file cases in multiple jurisdictions with varying interpretations of this doctrine.

In addition, the doctrine of equivalents can have an impact on trade and international business relations. When a company is accused of infringing a patent in one jurisdiction but not another due to differences in the interpretation of this doctrine, it may face inconsistent legal outcomes in different countries. This can lead to confusion and uncertainty for businesses operating across borders, as they must navigate complex patent laws and interpretations in various jurisdictions.

To address these issues, there have been efforts to harmonize patent laws and the application of the doctrine of equivalents across different jurisdictions. For example, the Agreement on Trade-Related Aspects of Intellectual Property Rights (TRIPS) established by the World Trade Organization (WTO) provides a minimum standard for the protection of intellectual property rights, including patents. However, countries retain the right to interpret and apply the doctrine of equivalents in their own legal systems, leading to variations in practice across different jurisdictions.

In conclusion, the doctrine of equivalents plays an important role in patent infringement cases across different jurisdictions, with varying interpretations that can impact international patent enforcement and trade relations. The harmonization of patent laws and the interpretation of this doctrine remains a challenge for businesses operating across borders, as they must navigate complex legal systems to protect their intellectual property rights.

Question2: In light of the California Consumer Privacy Act (CCPA), how do the obligations for businesses regarding consumer data privacy compare between California and the standards set by the General Data Protection Regulation (GDPR) in the European Union, and what are the specific challenges that businesses might face when trying to comply with both regulatory frameworks?

Response: The obligations for businesses regarding consumer data privacy under the CCPA and GDPR differ in several ways. Under the CCPA, consumers have the right to know what personal information is collected about them, the right to request that their personal information be deleted, the right to opt-out of the sale of their personal information, and the right to equal service and price, even if they exercise their privacy rights. The GDPR, on the other hand, grants individuals more extensive rights over their data, including the right to access, rectify, erasure, restrict processing, object to processing, data portability, and the right not to be subject to automated decision-making or profiling.

One of the specific challenges businesses face when trying to comply with both regulatory frameworks is the differences in definitions and scopes. For example, the CCPA applies to for-profit entities that collect personal information from California residents, while the GDPR applies to controllers and processors established in the EU or targeting data subjects within the EU. Additionally, the scope of the GDPR is broader than the CCPA, as it covers all personal data, while the CCPA focuses on personal information.

Another challenge businesses face is the potential overlap in some obligations, such as the right to access and rectify personal data. While both regulations require businesses to provide consumers with information about their personal data processing practices, there are differences in how these rights are exercised, which could lead to confusion for businesses trying to comply with both frameworks.

Furthermore, the CCPA requires that businesses provide a clear and conspicuous link on their homepage titled "Do Not Sell My Personal Information," while the GDPR requires that businesses provide a link entitled "Privacy Policy" in the footer of their website. This difference in terminology could also lead to confusion for businesses trying to comply with both frameworks.

In summary, the obligations for businesses regarding consumer data privacy under the CCPA and GDPR differ in several ways, and businesses face challenges when trying to comply with both regulatory frameworks due to differences in definitions and scopes, overlapping obligations, and terminology.