Benefits of Using DSPy for Building Multistage Agents

DSPy is a handy framework that reduces the effort involved in prompt engineering for building AI applications. It achieves this by providing modules to structure the prompts and optimizers that tweak the prompts based on example data.

When building multistage LLM-based agents, DSPy makes it extremely convenient to design the workflow. Multistage agents typically require extensive prompt-tweaking to work well, and without DSPy, this can become complicated and messy. Each step requires manually engineering the prompts until the desired results are achieved, and then ensuring that these steps work together.

DSPy’s modules and optimizers handle much of this prompting work for you. The approach is also LLM-agnostic: if you switch to a different LLM, you don’t have to rewrite your prompts to fit the new model’s template, because DSPy generates the prompts from your signatures. This lets you use whichever Large Language Model you prefer without re-engineering prompt templates.

In this blog, we’ll design a multistage Personal Styling Agent that gives users styling advice based on their preferences.

Personalized Styling Agent - Workflow

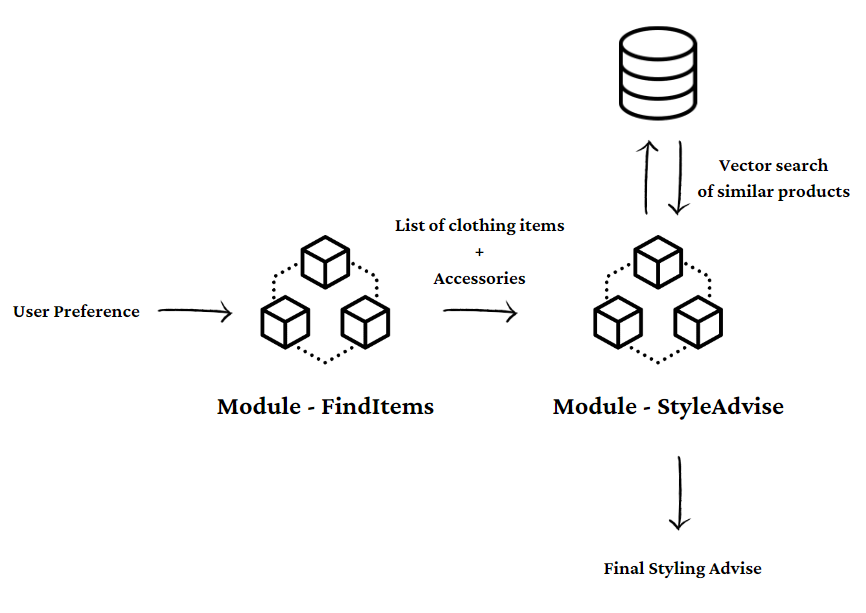

We’ll break our agent into two modules. The first module will generate a list of clothing items and accessories based on user preferences.

We’ll also store a list of products separately in the Qdrant Vector Database. The second module will fetch products from this vector database that are similar to the items generated by the first module. It will then generate styling advice based on the fetched list of products.

This way, users receive advice based on the available list of products. This feature can be useful for e-commerce companies that are looking to provide product recommendations to users based on their preferences.
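The workflow above can be sketched in plain Python before any LLM or database is involved. This is only an illustration of the control flow: run_pipeline and the three fake_* stand-ins are hypothetical names introduced here, not part of DSPy or Qdrant, and the tiny catalog is made-up sample data.

```python
from typing import Callable, List


def run_pipeline(
    preference: str,
    generate_items: Callable[[str], List[str]],
    retrieve: Callable[[str], List[str]],
    advise: Callable[[List[str]], str],
) -> str:
    """Chain the stages: preference -> items -> retrieved products -> advice."""
    items = generate_items(preference)      # module 1: propose items
    products: List[str] = []
    for item in items:                      # module 2a: one search per item
        products.extend(retrieve(item))
    return advise(products)                 # module 2b: write the advice


# Hypothetical stand-ins so the sketch runs without an LLM or a vector DB.
CATALOG = {"hat": ["Straw Sun Hat"], "sunglasses": ["Aviator Sunglasses"]}


def fake_generate(preference: str) -> List[str]:
    return ["hat", "sunglasses"]


def fake_retrieve(item: str) -> List[str]:
    return CATALOG.get(item, [])


def fake_advise(products: List[str]) -> str:
    return "Try combining: " + ", ".join(products)


result = run_pipeline("beach day", fake_generate, fake_retrieve, fake_advise)
print(result)
```

In the real agent, the first stand-in becomes an LLM call, the second a Qdrant similarity search, and the third another LLM call.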

Build with E2E Cloud

This blog is a part of the ‘Build with E2E Cloud’ series. E2E Networks is an NSE-listed, AI-first hyperscaler that provides accelerated cloud computing solutions, including cutting-edge cloud GPUs like the A100 and H100.

E2E Cloud also provides a comprehensive and robust AI development platform called TIR for various machine learning and artificial intelligence operations. The TIR platform provides a suite of tools to develop, deploy, and manage AI models effectively.

Head over to https://myaccount.e2enetworks.com/ to learn more about E2E’s services!

Let’s Code

Since we are going to use Llama3 for this project, we recommend an A100 or H100 node on E2E Cloud.

Begin by installing the dependencies:

pip install dspy-ai ollama qdrant-client fastembed

Initialize the vector database. You can also use the Qdrant instance offered by TIR.

from qdrant_client import QdrantClient

# Initialize the client
client = QdrantClient(":memory:")

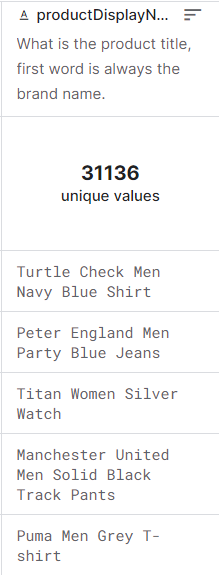

We’ll use a fashion product dataset from Kaggle. More specifically, we’ll vectorize one column from this dataset, productDisplayName, which contains the names of the products. We load it as follows:

import pandas as pd

# Read the CSV file
df = pd.read_csv('styles.csv', on_bad_lines='skip')

# Extract the 'productDisplayName' column into a list
product_display_names = df['productDisplayName'].astype(str).tolist()
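One detail worth checking: astype(str) turns missing values into the literal string "nan", which would then be embedded like any other product name. The following is a minimal sketch of a cleanup step, assuming you want to drop such entries; clean_names is a hypothetical helper, not part of pandas or the original pipeline.

```python
def clean_names(names):
    """Drop empty strings and 'nan' placeholders, and trim whitespace."""
    cleaned = []
    for name in names:
        text = str(name).strip()
        # astype(str) renders missing values as the string "nan"
        if text and text.lower() != "nan":
            cleaned.append(text)
    return cleaned


sample = ["Blue Shirt", "nan", "   ", " Red Tie "]
print(clean_names(sample))
```

If you adopt something like this, apply it to product_display_names before inserting the data into the vector database.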

We’ll now insert the product names in batches of 1,000. The client.add call embeds each batch with FastEmbed before storing it.

# Function to split list into batches of specified size
def batch_list(lst, batch_size):
    for i in range(0, len(lst), batch_size):
        yield lst[i:i + batch_size]

# Define batch size
batch_size = 1000

# Process the batches, assigning sequential IDs that start at 1
for idx, batch in enumerate(batch_list(product_display_names, batch_size)):
    start_id = idx * batch_size + 1
    end_id = start_id + len(batch) - 1
    doc_ids = list(range(start_id, end_id + 1))

    client.add(
        collection_name="product_list",
        documents=batch,
        ids=doc_ids,
    )
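To see that the ID arithmetic yields one unique, sequential ID per document, even when the last batch is shorter, here is a small self-contained check. It repeats batch_list and moves the ID calculation into a hypothetical helper, batch_ids, using a toy list of seven names and a batch size of three.

```python
def batch_list(lst, batch_size):
    """Yield consecutive slices of lst with at most batch_size elements."""
    for i in range(0, len(lst), batch_size):
        yield lst[i:i + batch_size]


def batch_ids(idx, batch, batch_size):
    """Return the 1-based document IDs for the batch at position idx."""
    start_id = idx * batch_size + 1
    return list(range(start_id, start_id + len(batch)))


names = [f"product-{n}" for n in range(7)]  # toy data for illustration
all_ids = []
for idx, batch in enumerate(batch_list(names, 3)):
    all_ids.extend(batch_ids(idx, batch, 3))

print(all_ids)  # [1, 2, 3, 4, 5, 6, 7]
```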

Now we’ll configure DSPy to include the Qdrant Retriever and the LLM Llama3.

import dspy
from dspy.retrieve.qdrant_rm import QdrantRM

qdrant_retriever_model = QdrantRM("product_list", client, k=3)

ollama_model = dspy.OllamaLocal(
    model="llama3",
    model_type="text",
    max_tokens=350,
    temperature=0.1,
    top_p=0.8,
    frequency_penalty=1.17,
    top_k=40,
)

dspy.settings.configure(lm=ollama_model, rm=qdrant_retriever_model)

Make sure you have Ollama installed on your system. On Linux, you can install it with the official script:

curl -fsSL https://ollama.com/install.sh | sh

You can start the server by running:

ollama serve

Now pull llama3.

ollama pull llama3

Alternatively, you can deploy a Llama3 endpoint on TIR and use that instead.

Now we’ll write a signature for our first module to generate a list of clothing items and accessories.

class Items(dspy.Signature):
    """
    Extract clothing items and accessories given user preference
    """

    prompt = dspy.InputField()
    terms = dspy.OutputField(format=list)

In DSPy, prompts are structured as signatures. The docstring contains the instruction of the prompt. The variable names (‘prompt’ and ‘terms’) are important because they are passed on to the LLM, so make sure they are meaningful.

The call to the LLM happens in the modules. We’ll use a module called dspy.Predict, which simply predicts the outcome given a prompt. You can find the full list of modules in the DSPy documentation.

class FindItems(dspy.Module):
    def __init__(self):
        super().__init__()
        self.item_extractor = dspy.Predict(Items)

    def forward(self, question):
        # Adjacent string literals are concatenated, so separate the two sentences explicitly
        instruction = (
            "Return a list of clothing items and accessories to be worn by a user, given their preferences. "
            f"Preferences: {question}"
        )
        prediction = self.item_extractor(prompt=instruction)
        return prediction.terms

Now that we have this module written, let’s optimize it. We’ll first create a trainset that contains samples of what we want our outputs to look like.

trainset_dict = {
    "desert_wear": "* Lightweight, breathable long-sleeve shirt\n* Wide-brimmed hat\n* Sunglasses with UV protection\n* Cargo pants\n* Lightweight scarf or shemagh\n* Hiking boots with good ventilation\n* Moisture-wicking socks\n* Sunscreen\n* Hydration pack or water bottle\n* Lightweight jacket for cooler evenings",
    "caribbean_party": "* Flowy sundress with tropical print\n* Beach hat with colorful feathers\n* Statement necklace with seashells and beads\n* Flip flops in bright colors\n* Sunglasses with brightly colored frames\n* Lightweight, short-sleeve shirt with floral patterns\n* Comfortable shorts\n* Colorful bangles or bracelets\n* Straw tote bag\n* Lightweight scarf or wrap",
    "award_function": "* Elegant evening gown or tuxedo\n* Statement jewelry (earrings, necklace, bracelet)\n* Dress shoes or heels\n* Clutch or small evening bag\n* Cufflinks and tie (for tuxedo)\n* Evening makeup and hairstyle\n* Silk scarf or shawl\n* Formal watch\n* Perfume or cologne\n* Pocket square (for tuxedo)"
}

The keys of this dictionary represent the user preferences, and the values represent the output we want from the LLM.

Let’s wrap it in a format that is suitable for DSPy.

trainset = [dspy.Example(question=key, answer=value) for key, value in trainset_dict.items()]

Then we optimize it.

from dspy import teleprompt

teleprompter = teleprompt.LabeledFewShot()
optimized_Find_Items = teleprompter.compile(student=FindItems(), trainset=trainset)
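Conceptually, few-shot optimization with labeled examples means that worked examples are placed in the prompt ahead of the new query, so the model can imitate their format. The sketch below illustrates that idea in plain Python. It is not DSPy’s actual implementation or prompt template: build_few_shot_prompt and the "Preference:" and "Items:" labels are assumptions made for illustration.

```python
def build_few_shot_prompt(instruction, demos, query):
    """Assemble an instruction, labeled demos, and the new query into one prompt."""
    parts = [instruction, ""]
    for demo_input, demo_output in demos:
        parts += [f"Preference: {demo_input}", f"Items:\n{demo_output}", ""]
    # The final block is left open so the model completes the item list.
    parts += [f"Preference: {query}", "Items:"]
    return "\n".join(parts)


demos = [("desert_wear", "* Wide-brimmed hat\n* Cargo pants")]
prompt = build_few_shot_prompt(
    "Extract clothing items and accessories given user preference",
    demos,
    "party wear",
)
print(prompt)
```

Printing the result shows why the model tends to answer with a bulleted list: every example it sees before the query is formatted that way.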

Let’s put our optimized module to the test.

response = optimized_Find_Items("party wear")
print(response)

Here is the extracted list of clothing items and accessories:

Party Wear

* Sequined dress

* High heels (black or red)

* Statement necklace with rhinestones

* Earrings with dangling crystals

* Sparkly clutch purse (silver or gold)

* Cocktail ring with gemstones

As we can see, we are getting our output in the format we’d asked for. Now, we’ll write a parsing function that collects the names of these items into a list.

def parse_clothing_items(text):
    # Split the text into lines
    lines = text.split('\n')

    # Initialize an empty list to hold the items
    items = []

    # Loop through each line
    for line in lines:
        # Check if the line starts with '*'
        if line.strip().startswith('*'):
            # Remove the '*' and any leading/trailing whitespace, then add to the list
            item = line.strip()[1:].strip()
            items.append(item)

    return items
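LLM output does not always use the same bullet character, so a slightly more tolerant parser can be useful. Here is a minimal sketch using a regular expression that accepts "*", "-", or "•" as the bullet marker; parse_bullets is a hypothetical alternative to parse_clothing_items, and the sample text is made up for the demonstration.

```python
import re

# A bullet line: optional indent, one of * - •, at least one space, then the item.
BULLET = re.compile(r"^\s*[*\-\u2022]\s+(.*\S)\s*$")


def parse_bullets(text):
    """Return the text of every bulleted line, ignoring headings and prose."""
    items = []
    for line in text.splitlines():
        match = BULLET.match(line)
        if match:
            items.append(match.group(1))
    return items


sample = (
    "Party Wear\n\n"
    "* Sequined dress\n"
    "- High heels (black or red)\n"
    "  \u2022 Sparkly clutch purse\n"
    "Not a bullet"
)
print(parse_bullets(sample))
# ['Sequined dress', 'High heels (black or red)', 'Sparkly clutch purse']
```

Because the pattern requires whitespace after the marker, a bold heading such as "**Party Wear**" is not mistaken for an item.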

Next we write the final module, which receives the list of items from the first module, does a vector search to find a list of similar products, and then generates styling advice based on the list.

class Style(dspy.Signature):
    """
    Generate styling advice, given these products
    """

    products = dspy.InputField()
    answer = dspy.OutputField()

class Styling_Advise(dspy.Module):
    def __init__(self):
        super().__init__()
        self.generate_response = dspy.Predict(Style)
        self.retrieve = dspy.Retrieve(k=2)

    def forward(self, question):
        # Generate the item list with the first module and parse it
        item_list = parse_clothing_items(optimized_Find_Items(question))

        # Retrieve similar products from the vector database for each item
        product_list = []
        for item in item_list:
            product_list += self.retrieve(item).passages

        # Generate styling advice from the retrieved products
        response = self.generate_response(products=', '.join(product_list))
        return response.answer
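Because each item triggers its own vector search, the same product can be retrieved more than once and end up repeated in the prompt. The following is a small sketch of an order-preserving deduplication step that could be applied to product_list before joining it; dedupe_keep_order is a hypothetical helper, and matching on a lowercased, trimmed name is an assumption about what counts as a duplicate.

```python
def dedupe_keep_order(products):
    """Remove repeated product names while keeping first-seen order."""
    seen = set()
    unique = []
    for product in products:
        name = product.strip()
        key = name.lower()  # assumption: names differing only in case are duplicates
        if key not in seen:
            seen.add(key)
            unique.append(name)
    return unique


retrieved = [
    "French Connection Teal Dress",
    "Enroute Women Casual Taupe Heels",
    "Enroute Women Casual Taupe Heels",
    "French Connection Teal Dress",
]
print(dedupe_keep_order(retrieved))
# ['French Connection Teal Dress', 'Enroute Women Casual Taupe Heels']
```

A shorter product list also leaves more of the token budget for the advice itself.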

This final module serves as a complete agent: with just one call, it comes up with a list of items, retrieves similar products from the vector DB, and generates the styling advice.

Let’s test it out.

module = Styling_Advise()
response = module("Corporate Award Ceremony")
print(response)

Products: Aneri Women Black & Maroon Salwar Suit, Aneri Women Blue & White Salwar Suit, United Colors of Benetton Women White Shirt, Park Avenue Red Patterned Tie, Park Avenue Blue Patterned Tie, Basics Men Black Blazer Jacket, Basics Men Beige Blazer Jacket, Black Coffee Brown Formal Trousers, Basics Men Navy Trousers, ADIDAS Men Black Socks, Parx Men Black Socks, Woodland Men Brown Leather Shoes, Clarks Brown Leather Casual Shoes, Being Human Men Black Dial Orange Strap Watch, Being Human Men Black Dial Steel Plated Strap Watch, Revv Men Black and White Cufflinks, Belmonte Men Silver Cufflinks

Answer:

For the women's products (Aneri Salwar Suits):

* Pair the black & maroon salwar suit with a simple white or light-colored blouse to let the outfit shine.

* For a more formal look, pair the blue & white salwar suit with a crisp white shirt and minimal jewelry.

For men's accessories:

* The Basics Men Black Blazer Jacket is versatile - wear it with navy trousers for a classic look or beige trousers for a more relaxed vibe. Pair it with either ADIDAS or Parx black socks.

* For the Park Avenue ties, pair the red patterned tie with white dress shirt and dark-colored trousers (black coffee brown formal trousers work well). The blue patterned tie looks great with light-colored shirts and navy trousers.

For footwear:

* Woodland Men Brown Leather Shoes are perfect for a more casual look. Pair them with beige or light-colored pants.

* Clarks Brown Leather Casual Shoes can be dressed up or down, depending on the occasion. Try

Let’s try out another prompt:

response = module("wedding occasion")

print(response)

Products: Avirate Black Formal Dress, French Connection Teal Dress, s.Oliver Women's White Blouse Top, United Colors of Benetton Men Woven Black Tie, Enroute Women Casual Taupe Heels, Enroute Women Casual Taupe Heels, Ivory Tag Women Colour Interplay Multicolour Necklace, Ivory Tag Women Colour Beam Multicolour Necklace, French Connection Teal Dress, FNF Red Bridal Collection Lehenga Choli, Streetwear Satin Smooth Brides Mate Lipcolor 05, Jacques M Women Be Gracious Deo

Answer:

For a stylish and cohesive look with these products:

* Pair the Avirate Black Formal Dress with Enroute Women Casual Taupe Heels for a chic evening outfit. Add Ivory Tag Women Colour Interplay Multicolour Necklace to add some sparkle.

* The French Connection Teal Dress looks stunning on its own, but you can also pair it with United Colors of Benetton Men Woven Black Tie as an unexpected yet stylish combination.

* For a more casual look, team the s.Oliver Women's White Blouse Top with Enroute Women Casual Taupe Heels and Ivory Tag Women Colour Beam Multicolour Necklace for a chic everyday outfit.

* The FNF Red Bridal Collection Lehenga Choli is perfect for a special occasion. Pair it with Streetwear Satin Smooth Brides Mate Lipcolor 05 to complete the look.

* For men, the United Colors of Benetton Men Woven Black Tie can be paired with any formal attire.

Remember, accessorizing wisely and mixing high and low pieces can create a unique and stylish outfit that reflects your personal taste.

Final Words

DSPy’s signatures, modules, and optimizers provide a powerful method for building a multistage agent that moves away from traditional prompt engineering. In this blog, we learned how to build a multistage Personal Styling Agent using DSPy’s unique framework.

By taking inspiration from the above technique, you can design similar agents to provide assistance with activities like financial advising, travel planning, healthcare advice, and a variety of other use cases.

You can always rent a cloud GPU on E2E Networks, or you can deploy your entire stack on their TIR AI platform, which comes with a set of tools, containers, and ready-made endpoints that can assist you with your AI development.

References

https://towardsdatascience.com/building-an-ai-assistant-with-dspy-2e1e749a1a95